Comprehensive DLP for Rapid Deployment and Results

Our market-leading DLP, deployed via SaaS, gives you immediate visibility into your organization’s assets, blocking threats to your sensitive data before it’s lost.

Protect Critical Data and IP Wherever It Lives

Our industry-leading data protection solution shows you where your sensitive data is located, how it flows in your organization, and where it is put at risk.

Data Loss Prevention

- Locate, understand, and protect your sensitive data

- Comprehensive capabilities from discovery to monitoring to blocking

- Set soft and hard limits on permitted data actions, educating users while guarding against serious risk

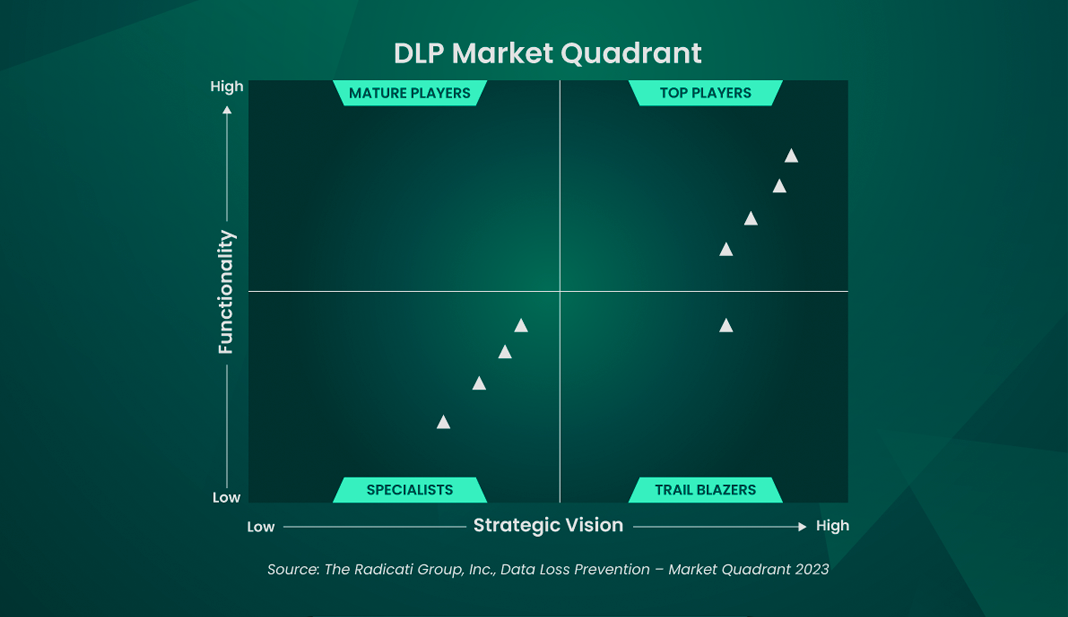

- Market quadrant leading DLP

Managed DLP

- Let our security experts host, administer, and run your data security platform, so you can run your business

- Our team can help deliver instant expertise and best practices, all customized to your data protection environment

- Eliminates the burden of cybersecurity staffing shortages

SaaS & Cloud Data Protection

- Complete visibility into all hardware, software, data creation, data storage, and data movement

- Dynamically defends against zero-day malware

- Simplified compliance solutions to maintain the visibility and control you need

Secure Collaboration

- Encrypt and control access to sensitive files wherever they go

- A Zero Trust approach to file sharing and collaboration with anyone, external or internal

- Confidently protect intellectual property, brand, trademark and asset data

A trusted leader in the data protection field.

Market Quadrant Leading DLP

GigaOm Radar for DLP

Digital Guardian Secure Service Edge

As the workforce becomes more decentralized, with work-from-home and hybrid schedules becoming the norm, the need for cloud and SaaS applications has grown beyond pre-pandemic expectations.

Fortra has partnered with Lookout and their Cloud Security Platform to enhance the security coverage of Digital Guardian from endpoint to cloud, protecting our customers’ sensitive data throughout its lifecycle, at rest, in use, or in motion.

DLP for Immediate Visibility and On-Demand Scalability

See Results Quickly

Intuitive, out-of-the-box dashboards provide immediate visibility into threats and help identify data egress.

Greater Deployed Efficacy

Help lay the foundation for your organization’s future operational security. A faster start-up speeds deployment and accelerates progress toward solution maturity.

Protect Data

Discover, monitor, log, and block threats to your data with pre-built policies that can help you avoid gaps and comply with evolving regulatory changes.

Cross-Platform Coverage

Support for hybrid environments provides coverage for Windows, macOS, and Linux operating systems, as well as browsers and applications.

Interoperable

Digital Guardian operates with existing data classification tools to allow for granular policies and advanced detection, reducing false positives and adding context to data.

Best-in-Class DLP Platform

Intuitive dashboards, reporting and workflows.

Schedule a Demo

See how Digital Guardian can help protect your critical data wherever it lives.

What’s New at Digital Guardian

Digital Guardian Brochure - 2024

The 2024 Data Loss Prevention Market Quadrant

How Multiple Industries Found Success with Digital Guardian Secure Collaboration

Children's Hospital Protects Protected Health Information with Managed Data Loss Prevention

Getting to Know Fortra's Digital Guardian for Data Loss Prevention (DLP)

GigaOm Radar for DLP

A Key Part of Fortra

Digital Guardian is proud to be part of Fortra’s comprehensive cybersecurity portfolio and a member of its Data Protection family of products. Fortra simplifies today’s complex cybersecurity landscape by bringing complementary products together to solve problems in innovative ways. These integrated, scalable solutions address the fast-changing challenges you face in safeguarding your organization. With the help of the powerful protection from Digital Guardian and others, Fortra is your relentless ally, here for you every step of the way throughout your cybersecurity journey.